I develop new forms of intelligent systems that can perceive multi-modal data and operate in complex real-world scenarios while achieving extreme levels of efficiency. My research lies at the intersection of machine learning (ML) systems and data science, and focuses primarily on understanding the computational challenges of end-to-end ML systems from both algorithmic and systems perspectives.

Earlier in my academic career, I developed a variety of localisation, communication and coordination protocols for mobile sensing platforms, ranging from sensor networks/IoT and mobile/wearable devices to robots.

Below are snapshots of the research projects I have worked on, with pointers to some relevant papers. For more detail about my work, please see my publications.

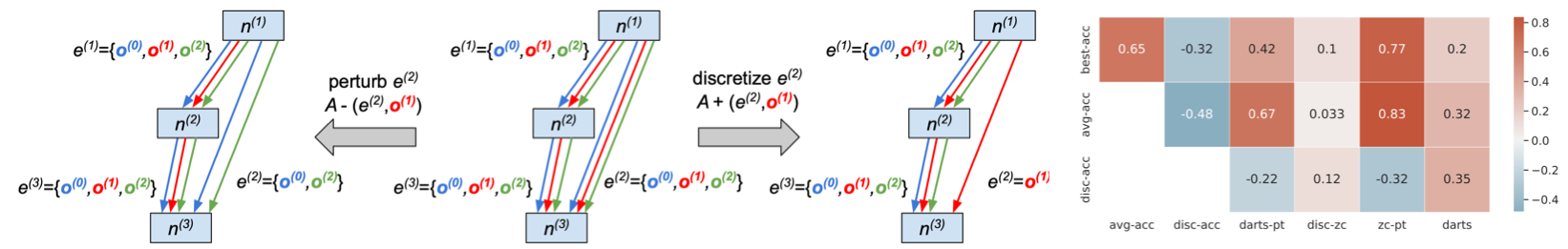

Efficient AutoML

Deployable On-device AI

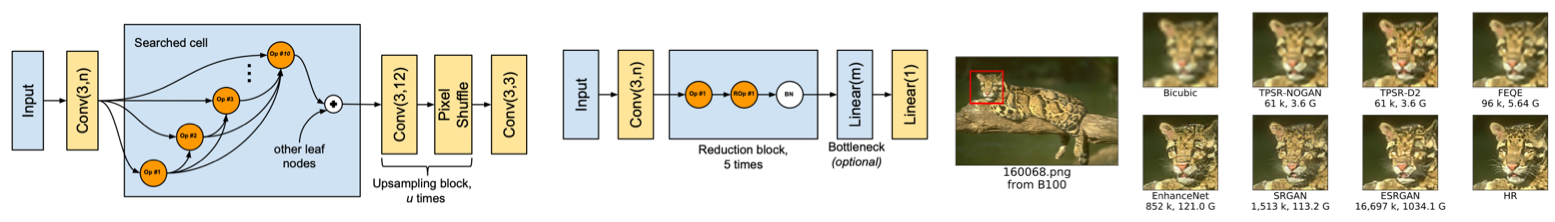

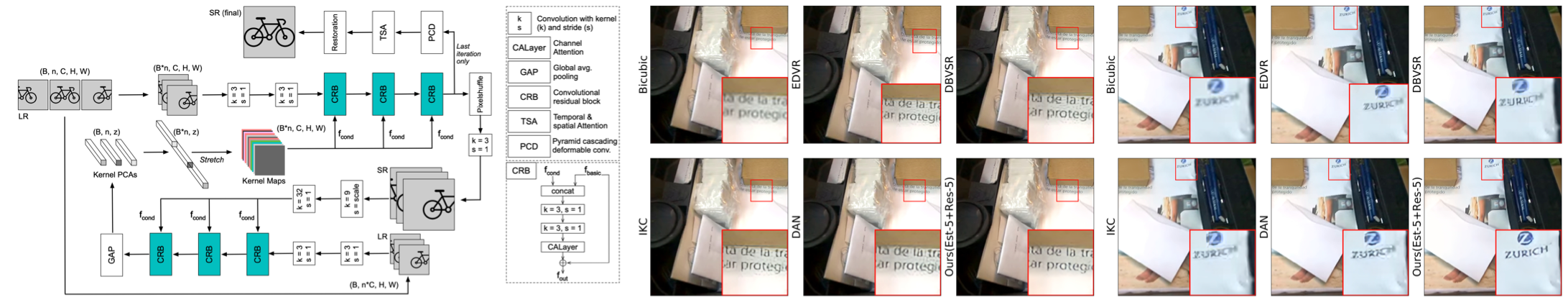

Blind Video Super Resolution

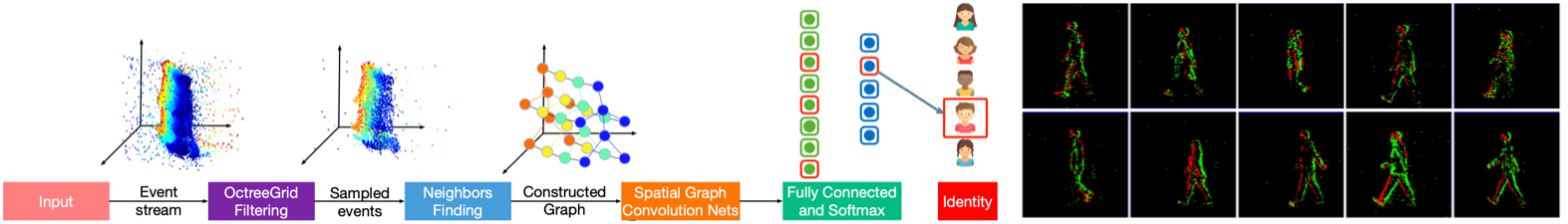

Event-based Motion Analysis

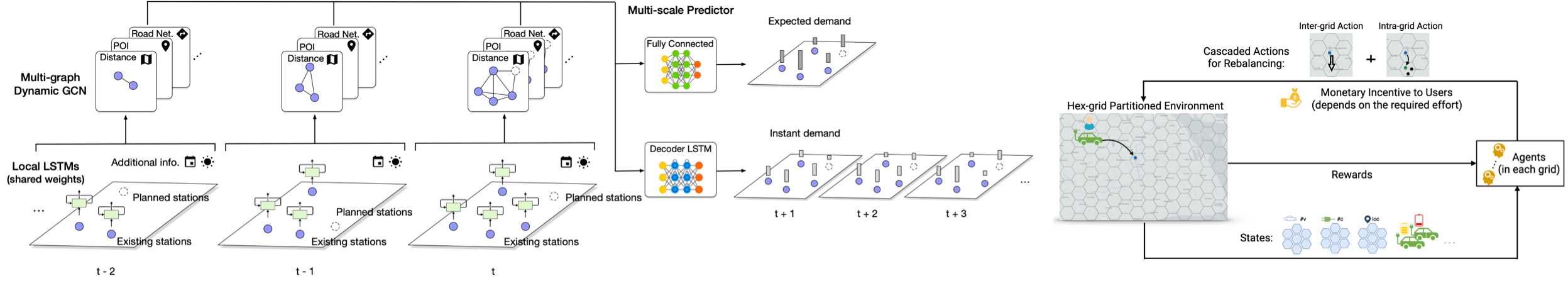

Spatio-temporal Analytics for Urban Mobility

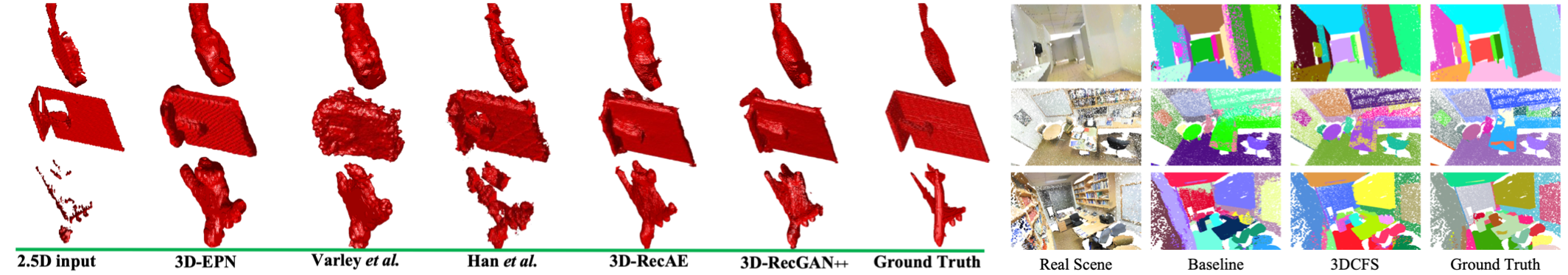

3D Segmentation & Reconstruction

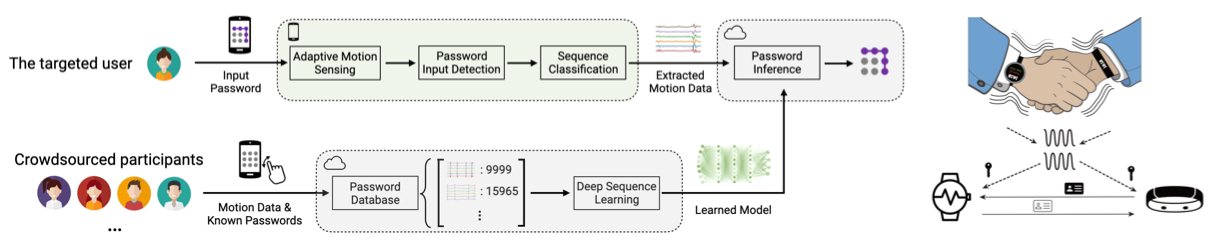

Security & Privacy on Mobile/Wearables/IoT

TOSN ’20, TDSC ’19, ISWC ’18, IMWUT ’17

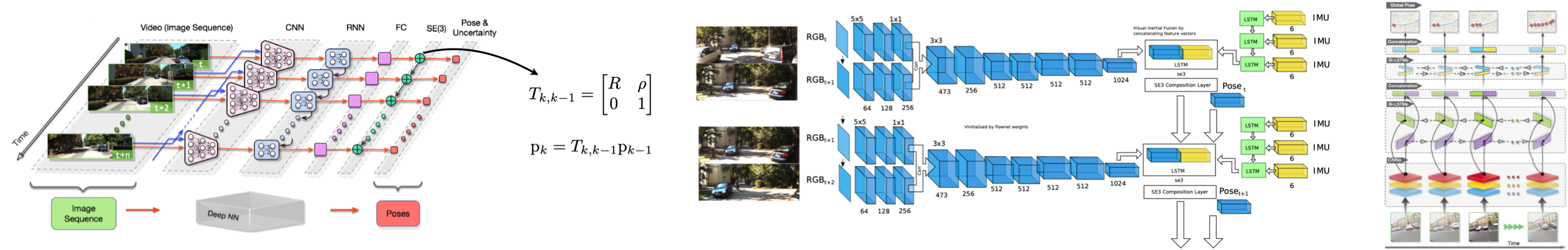

Deep Visual Tracking & Motion Estimation

IJRR ’18, CVPR ’17, AAAI ’17, ICRA ’17

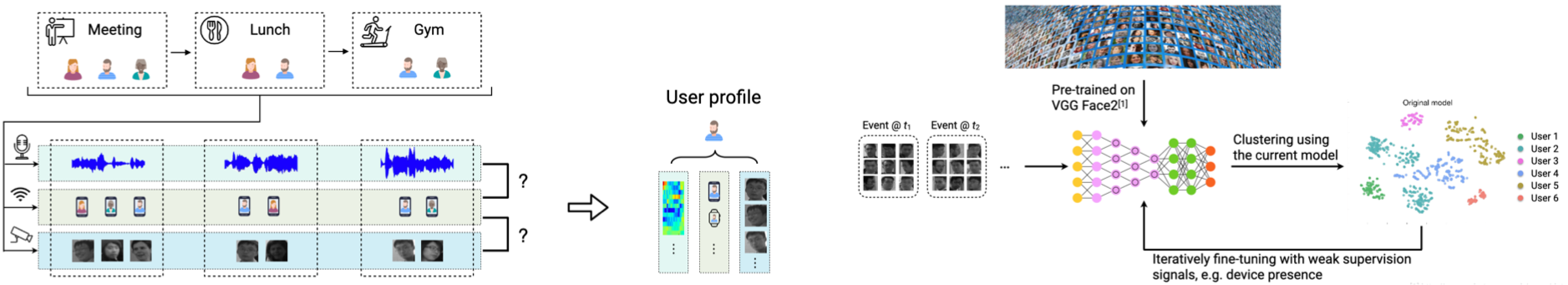

Cross-modality Association and Learning

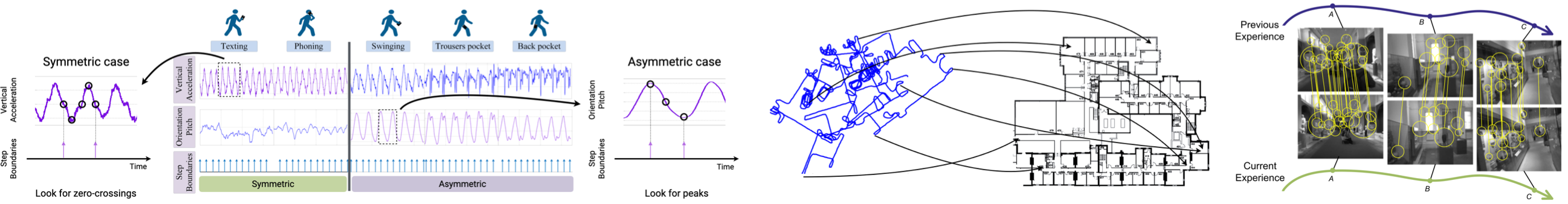

Localisation, Navigation & Mapping

TMC ’19, JSAC ’15, TMC ’15, IPSN ’14 (Best Paper)

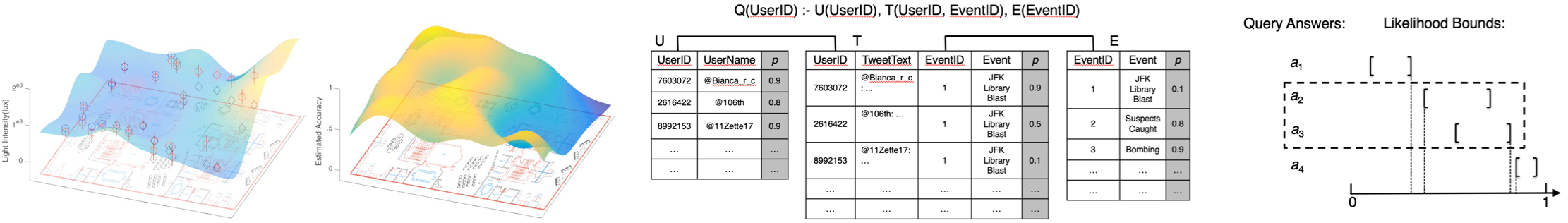

Probabilistic Data Management